Posing armatures using 3D keypoints

If you want to track a human's pose, you have a few methods with differing levels of complexity, ranging from slapping on active sensors all over your body, to tracking passive markers on your body, to tracking the whole body with a bunch of machine learning. In the end, you get points for all the markers that have been tracked. Now we want to use these to assemble a pose for a 3D model.

In some vtubing software I found pretty primitive trigonometry that only partially animates the torso and the hands. I also came across FABRIK, but the lack of resources beyond tersely documented formulae (much of it behind a paywall) made me give up and try something myself. As always, I hate everything and then make my own half-assed, barely functioning solution. I'm starting to notice a pattern in my life. Anyway, here goes:

Let us recall skinning. A skeleton has a pose, which assigns to every bone a transformation matrix describing the bone's orientation and position relative to the skeleton. The default pose (usually a T-pose or A-pose) is called the rest pose. For the skeleton to move, we want to find a set of matrices that matches our desired pose. A 3D model is then animated by applying the differences between the rest and new matrices to its vertices.
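
For concreteness, here is a minimal CPU sketch of that last step. It assumes plain linear blend skinning and rigid (unscaled) bone matrices, neither of which the post specifies: each bone's "difference" is pose * inverse(rest), and a vertex is moved by the weighted sum of those differences. Matrices are column-major like GLSL's mat4, and all type and function names here are hypothetical.

```cpp
#include <array>
#include <cstddef>
#include <vector>

// Column-major 4x4 matrix: m[col][row], matching GLSL's mat4 indexing.
using Mat4 = std::array<std::array<double, 4>, 4>;
using Vec4 = std::array<double, 4>;

Mat4 identity() {
    Mat4 m{};
    for (int i = 0; i < 4; ++i) m[i][i] = 1.0;
    return m;
}

// (a * b)[row][col] = sum_k a[row][k] * b[k][col], in m[col][row] storage.
Mat4 mul(const Mat4& a, const Mat4& b) {
    Mat4 r{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                r[col][row] += a[k][row] * b[col][k];
    return r;
}

Vec4 mul(const Mat4& m, const Vec4& v) {
    Vec4 r{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            r[row] += m[col][row] * v[col];
    return r;
}

// Inverse of a rigid transform (rotation + translation, no scale):
// transpose the rotation and map the translation t to -R^T t.
Mat4 rigid_inverse(const Mat4& m) {
    Mat4 r{};
    for (int col = 0; col < 3; ++col)
        for (int row = 0; row < 3; ++row)
            r[col][row] = m[row][col];
    for (int row = 0; row < 3; ++row) {
        double s = 0.0;
        for (int k = 0; k < 3; ++k) s += r[k][row] * m[3][k];
        r[3][row] = -s;
    }
    r[3][3] = 1.0;
    return r;
}

struct Influence {
    std::size_t bone;
    double weight;  // a vertex's weights normally sum to 1
};

// Linear blend skinning: v' = sum_i w_i * (pose_i * inverse(rest_i)) * v.
Vec4 skin_vertex(const Vec4& v, const std::vector<Influence>& influences,
                 const std::vector<Mat4>& rest, const std::vector<Mat4>& pose) {
    Vec4 out{};
    for (const Influence& inf : influences) {
        Vec4 p = mul(mul(pose[inf.bone], rigid_inverse(rest[inf.bone])), v);
        for (int i = 0; i < 4; ++i) out[i] += inf.weight * p[i];
    }
    return out;
}
```

For example, a vertex weighted half-and-half between a stationary bone and a bone moved 2 units along X ends up 1 unit along X.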

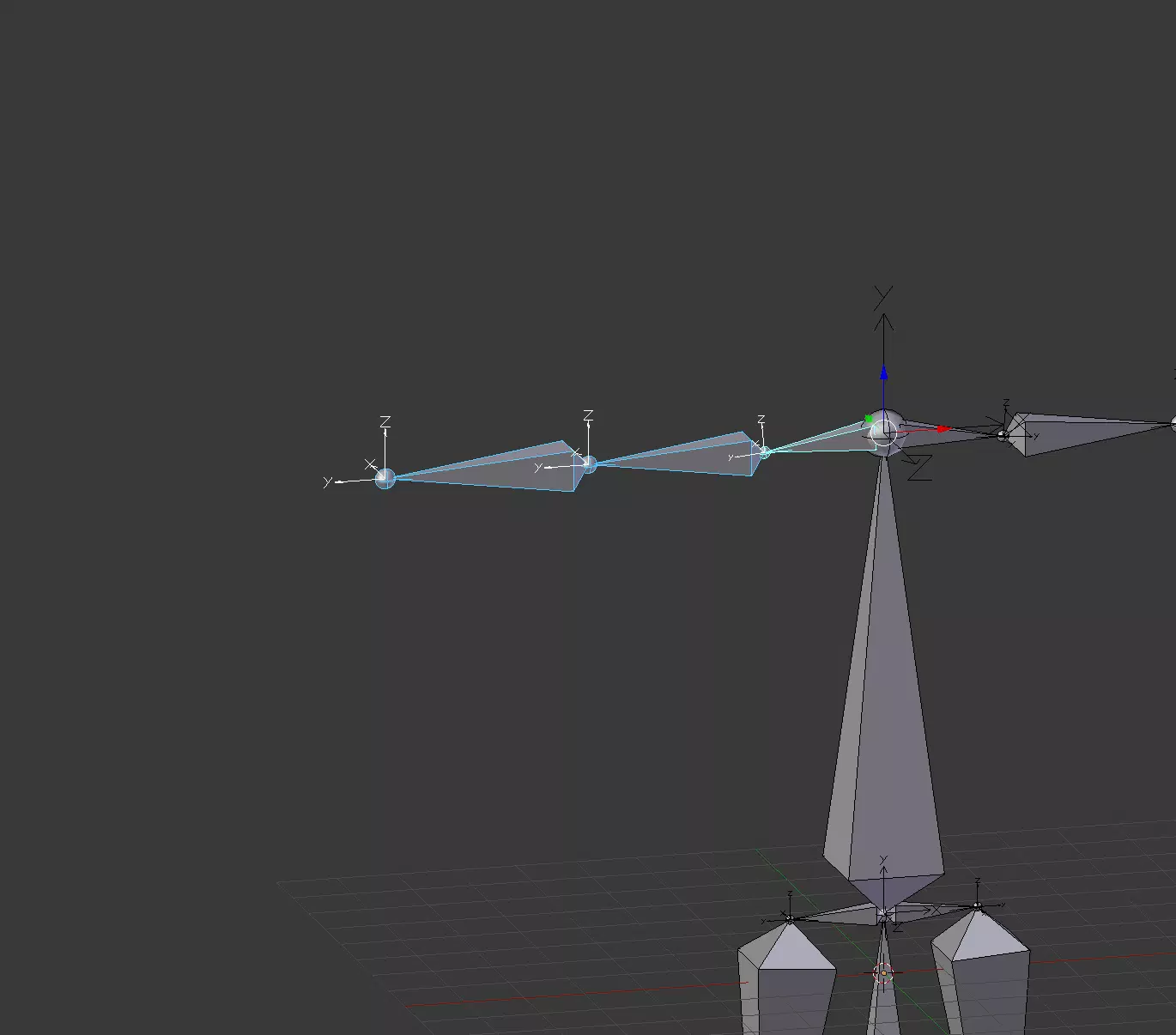

Now let us dissect a bone's matrix. Each bone has its own XYZ basis which defines where it's pointing, and this basis is encoded in the top-left 3x3 submatrix, one axis per column. Note that the Y axis is always the "forward" direction of the bone (a Blender convention[1]). The fourth column holds the translation of the bone from the skeleton's origin.
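
Concretely, with the column-major storage GLSL uses (m[col][row]), the three axes and the translation can be read straight out of the columns. A small sketch; the Mat4/Vec3 types and helper names are hypothetical, and bone_tail assumes you know the bone's length separately, since a rigid matrix doesn't store it.

```cpp
#include <array>

// Column-major 4x4 matrix: m[col][row], matching GLSL's mat4 indexing.
using Mat4 = std::array<std::array<double, 4>, 4>;

struct Vec3 {
    double x, y, z;
};

// Column `col` of the matrix, without its w component.
Vec3 column(const Mat4& m, int col) { return {m[col][0], m[col][1], m[col][2]}; }

Vec3 bone_x(const Mat4& m) { return column(m, 0); }
Vec3 bone_forward(const Mat4& m) { return column(m, 1); }  // Y: Blender's bone direction
Vec3 bone_z(const Mat4& m) { return column(m, 2); }
Vec3 bone_origin(const Mat4& m) { return column(m, 3); }   // the translation column

// Where the bone ends: its origin moved `length` units along its Y axis.
Vec3 bone_tail(const Mat4& m, double length) {
    Vec3 o = bone_origin(m), f = bone_forward(m);
    return {o.x + length * f.x, o.y + length * f.y, o.z + length * f.z};
}
```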

Additionally, we should look at the transformation matrix of a bone relative to its parent, inverse(parent) * child. Its rotation part now describes how the bone deviates from its parent. Its translation part is the offset of the child bone, expressed in the parent's basis. This is why, for a bone attached to the end of its parent, you will only ever find relative translations of the form (0, y, 0), where y is the length of the parent bone.
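
A quick numerical check of that claim, as a sketch assuming rigid bone matrices stored column-major (helper names are hypothetical): a parent of length 2 pointing up the world Z axis, and a child starting at the parent's tip but bent to point along world +Y. The relative matrix's translation comes out as (0, 2, 0), while its Y column, (0, 0, -1), records how the child deviates from its parent.

```cpp
#include <array>

// Column-major 4x4 matrix: m[col][row], matching GLSL's mat4 indexing.
using Mat4 = std::array<std::array<double, 4>, 4>;

// (a * b)[row][col] = sum_k a[row][k] * b[k][col], in m[col][row] storage.
Mat4 mul(const Mat4& a, const Mat4& b) {
    Mat4 r{};
    for (int col = 0; col < 4; ++col)
        for (int row = 0; row < 4; ++row)
            for (int k = 0; k < 4; ++k)
                r[col][row] += a[k][row] * b[col][k];
    return r;
}

// Inverse of a rigid transform (rotation + translation, no scale):
// transpose the rotation and map the translation t to -R^T t.
Mat4 rigid_inverse(const Mat4& m) {
    Mat4 r{};
    for (int col = 0; col < 3; ++col)
        for (int row = 0; row < 3; ++row)
            r[col][row] = m[row][col];
    for (int row = 0; row < 3; ++row) {
        double s = 0.0;
        for (int k = 0; k < 3; ++k) s += r[k][row] * m[3][k];
        r[3][row] = -s;
    }
    r[3][3] = 1.0;
    return r;
}

// The bone's transformation relative to its parent: inverse(parent) * child.
Mat4 relative(const Mat4& parent, const Mat4& child) {
    return mul(rigid_inverse(parent), child);
}
```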

Given two vectors a and b, we can find a 3D rotation matrix that rotates the former into the latter:

```glsl
mat3 rotation_between(vec3 a, vec3 b) {
    // We do not want scaling factors in our rotation matrix.
    a = normalize(a);
    b = normalize(b);

    vec3 axis = cross(a, b);
    float cosA = dot(a, b);
    // Undefined when a and b point in opposite directions (cosA == -1).
    float k = 1.0 / (1.0 + cosA);

    // GLSL's mat3(vec3, vec3, vec3) takes columns, so each vec3 below is one
    // column of the rotation that maps a onto b.
    return mat3(
        vec3((axis.x * axis.x * k) + cosA, (axis.x * axis.y * k) + axis.z, (axis.x * axis.z * k) - axis.y),
        vec3((axis.y * axis.x * k) - axis.z, (axis.y * axis.y * k) + cosA, (axis.y * axis.z * k) + axis.x),
        vec3((axis.z * axis.x * k) + axis.y, (axis.z * axis.y * k) - axis.x, (axis.z * axis.z * k) + cosA)
    );
}
```

It is incorrect to simply take two world-space vectors and use the rotation between them as the bone's rotation: that matrix rotates the "global" X, Y and Z axes, whereas we want the bone to rotate starting from its rest pose, around its own axes.

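```glsl
void bone_target(in Bone bone, vec3 target_dir) {
    mat4 parent_inv = inverse(bone.parent.transform);
    // The bone's transformation relative to its parent.
    mat4 rel_trans = parent_inv * bone.transform;

    // Rotate the bone's current direction onto the target direction, both in
    // parent space (w = 0, so target_dir is treated as a direction, not a point).
    mat3 rot_diff = rotation_between(mat3(rel_trans) * rel_trans[3].xyz, (parent_inv * vec4(target_dir, 0.0)).xyz);

    // mat3(...) ignores the translation; only the basis is rotated.
    mat3 new_rot = rot_diff * mat3(rel_trans);

    // mat4(mat3) fills the rest with identity; copy the original translation back.
    mat4 new_rel_trans = mat4(new_rot);
    new_rel_trans[3] = rel_trans[3];

    mat4 abs_trans = bone.parent.transform * new_rel_trans;

    // Override the transformation of the bone and all its descendants.
    update_bone_transform(bone, abs_trans);
}
```

Because all Blender bones point along +Y, mat3(rel_trans) * rel_trans[3].xyz could technically have just been rel_trans[1].xyz (i.e. the Y axis of the bone's relative rotation): with a relative translation of (0, y, 0), the product is just y times the second column, and rotation_between normalizes the length away anyway.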
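
Since GLSL can't be run on its own, here is a hedged CPU port of the same steps in C++, handy for unit-testing the math. Assumptions: a hypothetical Rigid struct (a row-major 3x3 rotation plus a position) stands in for a rigid mat4, the function returns the new absolute transform instead of calling update_bone_transform (whose body the post doesn't show), and it takes the rel_trans[1] shortcut described above for the bone's current direction.

```cpp
#include <cmath>

struct Vec3 { double x, y, z; };
struct Mat3 { double r[3][3]; };       // row-major: r[row][col]
struct Rigid { Mat3 rot; Vec3 pos; };  // hypothetical stand-in for a rigid mat4

double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 normalize(Vec3 v) {
    double len = std::sqrt(dot(v, v));
    return {v.x / len, v.y / len, v.z / len};
}
Vec3 column(const Mat3& m, int c) { return {m.r[0][c], m.r[1][c], m.r[2][c]}; }

Vec3 mul(const Mat3& m, Vec3 v) {
    return {m.r[0][0] * v.x + m.r[0][1] * v.y + m.r[0][2] * v.z,
            m.r[1][0] * v.x + m.r[1][1] * v.y + m.r[1][2] * v.z,
            m.r[2][0] * v.x + m.r[2][1] * v.y + m.r[2][2] * v.z};
}
Mat3 mul(const Mat3& a, const Mat3& b) {
    Mat3 out{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k) out.r[i][j] += a.r[i][k] * b.r[k][j];
    return out;
}
Mat3 transpose(const Mat3& m) {
    Mat3 out{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j) out.r[i][j] = m.r[j][i];
    return out;
}

// a * b: apply b first, then a.
Rigid compose(const Rigid& a, const Rigid& b) {
    Vec3 p = mul(a.rot, b.pos);
    return {mul(a.rot, b.rot), {p.x + a.pos.x, p.y + a.pos.y, p.z + a.pos.z}};
}
Rigid inverse(const Rigid& a) {
    Mat3 rt = transpose(a.rot);
    Vec3 p = mul(rt, a.pos);
    return {rt, {-p.x, -p.y, -p.z}};
}

// Shortest-arc rotation taking direction a onto b (undefined if they are opposite).
Mat3 rotation_between(Vec3 a, Vec3 b) {
    a = normalize(a);
    b = normalize(b);
    Vec3 v = cross(a, b);
    double c = dot(a, b), k = 1.0 / (1.0 + c);
    return {{{v.x * v.x * k + c, v.x * v.y * k - v.z, v.x * v.z * k + v.y},
             {v.y * v.x * k + v.z, v.y * v.y * k + c, v.y * v.z * k - v.x},
             {v.z * v.x * k - v.y, v.z * v.y * k + v.x, v.z * v.z * k + c}}};
}

// Aim the bone's Y axis at target_dir (armature space), keeping its origin in place.
Rigid bone_target(const Rigid& parent, const Rigid& bone, Vec3 target_dir) {
    Rigid parent_inv = inverse(parent);
    Rigid rel = compose(parent_inv, bone);          // bone relative to its parent
    Vec3 current = column(rel.rot, 1);              // bone's Y axis in parent space
    Vec3 target = mul(parent_inv.rot, target_dir);  // a direction: rotation only
    Mat3 rot_diff = rotation_between(current, target);
    Rigid new_rel{mul(rot_diff, rel.rot), rel.pos}; // keep the original offset
    return compose(parent, new_rel);
}
```

After the call, the bone's Y column should match the normalized target direction while its position stays where it was.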

This technique finds the shortest rotation for each bone, which isn't necessarily the correct one, but it's good enough. The bigger problem is that bone_target doesn't handle twisting (rotation around the bone's own Y axis), so bones like the head need additional logic.
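
One common way to add twist, which is not necessarily what the post's head logic does: after aiming the Y axis, measure the signed angle around that axis between the bone's current Z axis and a reference direction taken from other keypoints (which keypoints to use is an assumption), after projecting both onto the plane perpendicular to Y. Rotating the bone by that angle around its own Y axis then fixes its roll. A minimal sketch of the angle computation, with hypothetical names:

```cpp
#include <cmath>

struct Vec3 {
    double x, y, z;
};

double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}

// Drop the component of v along the unit vector n.
Vec3 project_onto_plane(Vec3 v, Vec3 n) {
    double d = dot(v, n);
    return {v.x - d * n.x, v.y - d * n.y, v.z - d * n.z};
}

// Signed angle (radians, right-hand rule about `axis`) that rotates `from`
// onto `to` once both are projected onto the plane perpendicular to `axis`.
// `axis` must be unit length, e.g. the bone's Y axis after bone_target.
double twist_angle(Vec3 axis, Vec3 from, Vec3 to) {
    Vec3 f = project_onto_plane(from, axis);
    Vec3 t = project_onto_plane(to, axis);
    // These are |f||t| sin(angle) and |f||t| cos(angle); atan2 doesn't need them normalized.
    return std::atan2(dot(cross(f, t), axis), dot(f, t));
}
```

For example, with the bone's Y axis pointing along (0, 1, 0), turning its Z axis (0, 0, 1) toward (1, 0, 0) gives +90 degrees, and toward (-1, 0, 0) gives -90 degrees.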

For keypoint estimation I use Google's MediaPipe library. I would switch to something else, but this is an entire field I have no time to delve into. The machine learning part is easy to spot: it only works well for common poses you see in photographs, and it can fail spectacularly when you try a mixture of them. If I stand facing the camera, it will report my head tilt as exactly zero degrees, which is wild.

A few other quirks I have had to work around:

- I have to scale the depth dimension by 0.4, otherwise I look like Quasimodo[2].

- The keypoints move upward when I jump, which is correct, but they also move downward when I merely step back.

- A 90-degree turn of my head only registers as about 40 degrees, so I double the angle to compensate.


P.S. I spent so long getting this working that I actually gained the ability to visualize a 4x4 transformation matrix in my head and know what it is doing (barring numbers that are "too difficult"). I will walk you through a real example I debugged.

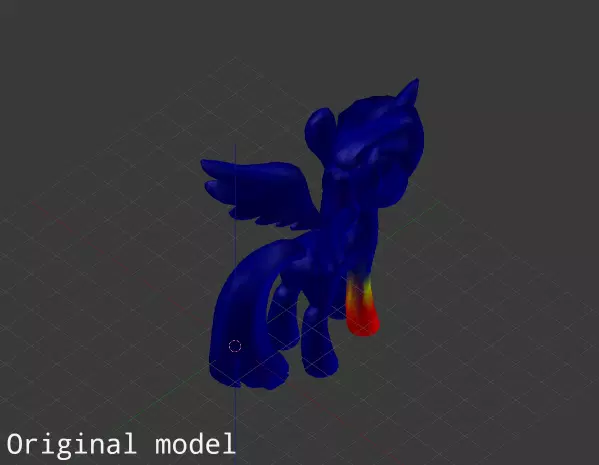

This is Twilight Sparkle's rest pose. Let us inspect the difference matrix of her right hoof as she raises it by 90 degrees:

$$
\begin{pmatrix}
1 & 0 & 0 & 0 \\
0 & 0 & 1 & -4.844 \\
0 & -1 & 0 & 4.127 \\
0 & 0 & 0 & 1
\end{pmatrix}
$$

Raising the hoof is rotating around the X axis, so the new X axis stays as (1, 0, 0). The Y axis gets mapped to (0, 0, -1) and the Z axis to (0, 1, 0), which forms a 90 degree turn.

As seen in the image, rotation alone isn't enough: the hoof rotated, but around the origin (0, 0, 0), leaving it distorted. The translation column comes to the rescue by moving the hoof back into place.
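
Why that particular translation? A rotation R about a pivot p maps a point v to R(v - p) + p = Rv + (p - Rp), so the translation column must be t = p - Rp. Solving that for the hoof's difference matrix above recovers the point the hoof pivots around. A small sketch with hypothetical names, assuming column-major storage as in GLSL; since the rotation axis is X, only the pivot's Y and Z coordinates are determined. With the values above it gives y of about -0.359 and z of about 4.486, presumably where the leg's top joint sits.

```cpp
#include <array>

// Column-major 4x4 matrix: m[col][row], matching GLSL's mat4 indexing.
using Mat4 = std::array<std::array<double, 4>, 4>;

struct PivotYZ {
    double y, z;
};

// For a rotation about the X axis, v' = R v + t with t = p - R p for any pivot p
// on the axis. Solve (I - R) p = t in the Y/Z plane with Cramer's rule.
// Undefined (det == 0) when there is no rotation at all.
PivotYZ pivot_about_x(const Mat4& m) {
    // (I - R) restricted to rows/columns Y and Z, where R[row][col] = m[col][row].
    double a = 1.0 - m[1][1], b = -m[2][1];
    double c = -m[1][2], d = 1.0 - m[2][2];
    double ty = m[3][1], tz = m[3][2];
    double det = a * d - b * c;
    return {(ty * d - b * tz) / det, (a * tz - c * ty) / det};
}
```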

It was only after realizing that this matrix was correct that I managed to find where my main bug was. Guess what? The renderer was reading a transposed rotation matrix. This goes to show that there is no form of knowledge you can't find a use for, whether it's this or Assembly for debugging. Stay in school, kids.
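
The symptom is easy to reproduce: the same 16 numbers read in the opposite order give the transpose, and the transpose of a rotation is its inverse, so every bone turns the wrong way. A sketch assuming the data is laid out column-major (GLSL's convention) and that the faulty reader treated it as row-major; the post doesn't say which layout each side used, and the helper names are hypothetical. With the hoof matrix above, the Y axis lands on (0, 0, 1) instead of (0, 0, -1).

```cpp
#include <array>

using Flat16 = std::array<double, 16>;  // a 4x4 matrix as 16 consecutive numbers

struct Vec3 {
    double x, y, z;
};

// Column-major (GLSL-style): element (row, col) is at index col * 4 + row.
double at_col_major(const Flat16& f, int row, int col) { return f[col * 4 + row]; }

// Row-major: element (row, col) is at index row * 4 + col.
double at_row_major(const Flat16& f, int row, int col) { return f[row * 4 + col]; }

// Apply only the upper-left 3x3 (rotation) block to a direction.
template <typename Reader>
Vec3 rotate(const Flat16& f, Reader at, Vec3 v) {
    const double in[3] = {v.x, v.y, v.z};
    double out[3] = {0.0, 0.0, 0.0};
    for (int row = 0; row < 3; ++row)
        for (int col = 0; col < 3; ++col) out[row] += at(f, row, col) * in[col];
    return {out[0], out[1], out[2]};
}
```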

[1] I felt my sanity leaving me trying to figure out what exactly in Blender makes the vector (0, 1, 0) special, but I gave up for my own welfare.

[2] Oh, look at that. Support is being unhelpful again. Perfect example of an inflated complete/open issue ratio. 2 weeks until closure is actually malicious.